Dominic Cronin's weblog

Another Access Manager gotcha

I'm on a roll today - this is my second blog post about a gotcha in the Tridion Access Manager. The first was quite technical, but this is just really a heads up about something that's fine if you expect it and know what to do.

When you first run Access Manager and you haven't yet added an identity provider, you are still able to undertake various system admin tasks (such as adding an identity provider) without being logged in. The thing is, that as soon as you successfully add an identity provider, that doesn't work any more, so at some point in the process, you have to log in at the identity provider and then you get a confirmation back from Access Manager that the identity provider has been added.

The gotcha is that it doesn't then log your browser session in as the recently confirmed account at the identity provider, so as far as the server is concerned, all that anonymous sysadmin stuff is over, and you need to be using your new account. The documentation does helpfully mention that "the first idP needs to give Administrator-level access to at least one user", but be careful. If you get it wrong, you can lock yourself out completely. (Fortunately, on my development rig it takes me about 2 minutes to drop the database and run the script to create it again. In a production scenario, you might want to have the steps very clearly mapped out and a fallback plan.)

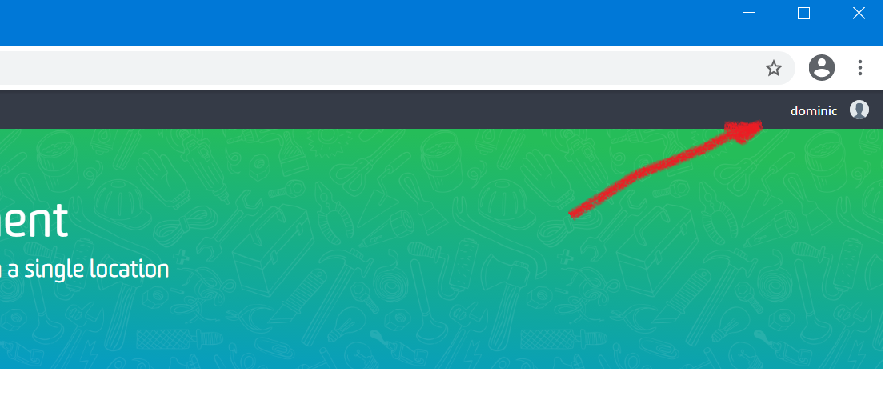

Anyway - back to the gotcha, which is that having added your IdP, you are still not logged in. In the screencap, you can see how it looks now that I'm successfully logged in with my "dominic" account from the IdP, but at this point, it will probably still say Anonymous in the top right corner. If you click on various buttons trying to do things, the browser will get a response that authorization is required, and it will throw up a login dialog. Unfortunately this login doesn't seem to go via the identity provider, so you're left stupidly typing in all the possible combinations of username and password that it might possibly be.

The solution is actually quite simple. Simply close your browser entirely, then open it again and return to the Access Management URL. It will ask you to log in, and you can now successfully log in with your new credentials. So it's not the end of the world, but when you are playing with new stuff, even this kind of headwind is enough to drive you bonkers. I reckon I went round this particular loop five or six times before I eventually got it right. At the same time, I was trying to tweak the identity provider so that the right claims would be added to the ID token to match what was expected for the "Username claim" and the "Full name claim" and it wasn't until I got that far that I realised what the problem was with logging in.

Hopefully this will save someone some hair-pulling.

Keylength gotcha when setting up Tridion Access management.

I'm busy setting up Access Management on my Tridion server. There are quite a lot of moving parts, so I'm working step by step and checking as I go. I've got as far as configuring the details of my identity provider, and I'm about to start kicking the tyres properly, but it's not quite working just yet. My "go to" tool for checking something like this is Fiddler: I want to see what traffic is going backwards and forwards between the Access Manager server and the identity provider, if only to see what's in the JSON, but before I can get to that, I can see that I'm getting some 500 errors when the browser calls the /access-management/connect/token endpoint.

In the response, I can see the following:

IDX10630: The 'Microsoft.IdentityModel.Tokens.X509SecurityKey,

KeyId: '604BDFF176DB97F7C6D42CC4E7252C92F69F6A82',

InternalId: '48edf05d-a5da-4c81-98d7-96a4e08da898'.'

for signing cannot be smaller than '2048' bits. KeySize: '1024'.

(Parameter 'key.KeySize')

It says I should check the logs, and sure enough, the same information is there. I'm guessing that it's trying to sign the token using the certificate I've provided. It's using the Microsoft.IdentityModel library - which is probably quite justified in complaining about an insecure key length. Maybe you'll never come across this problem in production work, but I'd just generated the certificate in OpenSSL using the defaults. Close enough for a dev box, I thought, but apparently not.

So - back to OpenSSL and this time, I've specified the key length when generating the keys.

openssl genpkey -out accmanTokenSigning.key -algorithm RSA -pkeyopt rsa_keygen_bits:2048

With the new keys, it's simply a question of generating a new CSR, signing it, and exporting it to pfx format, copying it over to my server, editing the appsettings.json to point at the new file and a quick IISRESET.

It looks like that's now working, and I can get a bit further with familiarising myself with the intricacies of the relationship between Access Manager and the identity provider.

With the best will in the world, there's no way Tridion R&D can catch every possible way in which a library from Microsoft that they use decides to be fussy. To be fair to Microsoft, it's fussy in a good way. For end users like me, it's just one more gotcha to look out for.

The main takeaway from this is "don't doubt yourself". When you're dealing with an unfamiliar system and it doesn't immediately behave as it should, the temptation is to just throw your hands up and assume it's beyond you to get the incantations right. It's black magic, after all, and you don't understand it. So back to the old mantra: "look in the logs, look in the other logs, and look in the logs you haven't thought of". In this case, Fiddler got me there pretty quickly - that had been my starting point, because I didn't know if it was Access Manager or the identity provider causing trouble. Even if the HTTP response hadn't said look in the logs, I would have done so fairly soon. There's always more information if you look for it.

Programmatically changing the Publishable flag on a Category

Not long ago I was writing a script which, among many other things, needed to set the Publishable property of a category. In the Tridion user interface, a category has a checkbox, large as life, that says "Publishable". How hard could it be, I thought. :-)

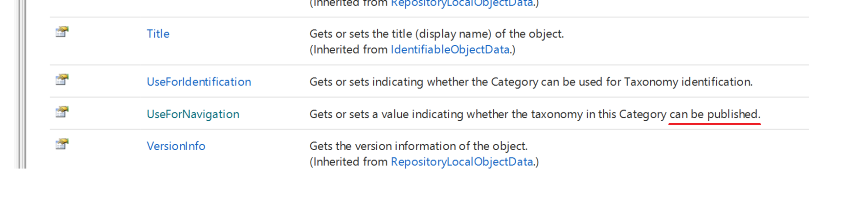

It turns out that when you work with the API (in this case, the core service), it's not called Publishable (or any variation on that), but UseForNavigation.

I kind of get it. Back when categories first could be published, the focus was on using them to build navigations. There's even a note in the documentation that says "Before SDL Tridion 2009 the behavior was get or set whether the taxonomy can be used for navigation."

Well, I suppose every product as complex (and powerful) as Tridion will have its history and quirks. In fact, it only cost me a few minutes to figure this out, so it's not really a problem. I'm still going to file this post under "gotchas" though!

Don't mount your Hyper-V disk and change the contents if it has checkpoints

I just managed to get myself into trouble with Hyper-V. I'm busy setting up a new Tridion image, so I'd started with a fresh Windows Server Essentials install, and then once I had that running, I wanted to copy a handful of installers from the host computer to the new image. What could be simpler than just mounting the VHDX, I thought. Wrong!

So.... mounting the virtual disk is easy, you just right-click on the file, and Windows offers you a Mount option in the context menu. You have to stop the virtual server first, but OK. So I did this - copied my installers over, unmounted the drive with the Eject option from the context menu of what had become E: and went back to start the image again. This promptly failed with various messages that said there was a mismatch between the differencing virtual disk and the parent disk. I hadn't really wanted a differencing disk (which is what it turns out a checkpoint is really called). Checkpoint shmeckpoint... this is not a highly available super reliable server I'm building. Anyway - checkpoints... it looks like it's just journaling; all your edits go in the checkpoint file, and I suppose a restore is just deleting the checkpoint file.

Enough speculation - what it means is that for the disk to work, Hyper-V has to know the order in which the various slices are layered on top of each other, and when it does that, it also does some integrity checking. Not a bad thing, you might think, but adding some files had presumably borked a checksum or whatever, and it was throwing up the mismatch message. Apparently you can fix this through the Inspect button in the user interface, but then I got a different error. Fortunately, everything in Hyper-V works from the command line too, so from PowerShell I was able to do the following and it was all good again.

Set-VHD .\some_checkpoint_or_other.avhdx -ParentPath .\TheVirtualDisk.vhdx -IgnoreIdMismatch

If you have more checkpoints, you can make each one in turn a parent of the other in the same way.

Mostly - this post is about the fact that it's apparently stupid to mount a VHDX and edit it, and nobody had told me. Next time I'll just run up a share.

Constructing an ImportExport ItemsSelector in PowerShell

I've used the Tridion ImportExport API a couple of times from the PowerShell, and until now, I didn't really have any reason to use anything except a Subtree selector for my exports. If you put your items in a bundle, this is what you use, and for the rest, mostly what you want is everything in a folder or structure group. Invoking the constructor of SubtreeSelection usually looks something like this:

$selection = New-Object Tridion.ContentManager.ImportExport.SubtreeSelection $someOrgItemUrl,$true

This is fine because the arguments are both single variables. The trouble comes when you want to construct an Items Selector. Your first attempt probably looks like:

$items = @($itemUrl)

$selection = New-Object Tridion.ContentManager.ImportExport.ItemsSelection $items

You're probably thinking: I only want one item, but the constructor expects an [IEnumerable[string]] so I'll just use the array subexpression operator @() to force my single item to be an array and let PowerShell take care of the rest of the magic of casting to IEnumerable. PowerShell for the easy life, eh?

But it doesn't work. You get back some message like

New-Object : Cannot convert argument "0", with value: "foobar", for "ItemsSelection" to type "System.Collections.Generic.IEnumerable`1[System.String]": "Cannot convert the "foobar" value of type "System.String" to type "System.Collections.Generic.IEnumerable`1[System.String]"."

So what's going on here? It turns out that PowerShell thinks that constructor parameters shouldn't be collections. However you want to imagine that, its type resolution logic ends up converting your collection back to a single item (presumably the first) which the constructor promptly rejects. I went through various hoops trying to force things to be an array, or a single item containing an array. You can create your array either with the subexpression operator @(), or just with a unary comma operator ($foo = ,$itemUrl) but I ended up calling split with an empty delimiter. I'm not saying it's pretty, but it worked for me. I then also cast it explicitly to the expected collection type. In PowerShell v5, the constructor is available using the static method syntax on the type, and calling the constructor this way is less prone to type resolution magic messing things up. Don't ask me exactly how. I have no idea. Anyway - this is what worked eventually:

[System.Collections.Generic.IEnumerable`1[System.String]]$items = $itemUrl.split('')

$selection = [Tridion.ContentManager.ImportExport.ItemsSelection]::new($items)

I hope this saves somebody some hair pulling and Googling.

Room for a little YAGNI in the DXA :-)

I wouldn't usually call out open source code in a blog post, but honestly, this made me Laugh Out Loud in the office yesterday. I'd ended up poking around in the part of the Tridion Digital Experience Accelerator framework (DXA) that deals with media items. Just to be clear, the media items in question would usually be either binaries that have some role in displaying your web site, or in this case, more specifically, downloads such as a PDF or whatever. The thing that made me laugh was in a function called getFriendlyFileSize(). A common use case for this would be to display a file size next to your download link so the visitor knows that they can download the PDF fairly quickly, or that maybe they'd better wait until they're on the Wi-Fi before attempting that 10GB ISO file.

getFriendlyFileSize() converts a raw number of bytes into something like 13MB, 7KB, or 5GB. What made me laugh was the fact that the author has also very helpfully included support not only for GigaBytes, but also TeraBytes, PetaBytes and ExaBytes.
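For anyone who hasn't met this kind of helper, here is a minimal sketch of the general idea in Python. It is not the DXA code: the function name `friendly_file_size`, the 1024 divisor and the rounding are all assumptions made for illustration.

```python
# Illustrative sketch only: not the DXA implementation.
# The unit list, the 1024 divisor and the rounding are assumptions.
UNITS = ["B", "KB", "MB", "GB", "TB", "PB", "EB"]


def friendly_file_size(size_in_bytes: int) -> str:
    """Convert a raw byte count into a short label such as '13MB'."""
    if size_in_bytes < 0:
        raise ValueError("size_in_bytes must not be negative")
    size = float(size_in_bytes)
    unit_index = 0
    # Step up one unit at a time until the number drops below 1024
    # or we run out of units.
    while size >= 1024 and unit_index < len(UNITS) - 1:
        size /= 1024
        unit_index += 1
    return f"{round(size)}{UNITS[unit_index]}"
```

Trimming `UNITS` back to gigabytes would be the YAGNI version; the loop stops at the last unit in the list either way.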

Sitting in my office right now, I'm getting about "140 down" from Speedtest. That is to say, my download speed from the Internet is about 140 Mbps, which in practice means that if I want to grab the latest CentOS-with-all-the-bells-and-whistles.ISO (let's say 10GB) it'll take me about 10 minutes. Scale that up to 10PB and we're talking about 18 years or so; at 10EB it's more like 18,000 years, which exceeds the longest web server uptime known to mankind by a few orders of magnitude and then some.
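The sums are easy to redo for your own line speed. The following is a back-of-envelope sketch in Python; it assumes decimal units (1 GB = 10^9 bytes, 1 Mbps = 10^6 bits per second) and a link that sustains its headline speed, which real ones rarely do. The function name `download_seconds` is just an illustrative choice.

```python
# Back-of-envelope download time, assuming decimal units and a link
# that actually sustains its advertised speed.
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60


def download_seconds(size_in_bytes: float, speed_mbps: float) -> float:
    """Seconds needed to move size_in_bytes at speed_mbps."""
    bits = size_in_bytes * 8
    return bits / (speed_mbps * 1_000_000)


ten_gb_minutes = download_seconds(10 * 10**9, 140) / 60
ten_pb_years = download_seconds(10 * 10**15, 140) / SECONDS_PER_YEAR
ten_eb_years = download_seconds(10 * 10**18, 140) / SECONDS_PER_YEAR

print(f"10GB: {ten_gb_minutes:.1f} minutes")
print(f"10PB: {ten_pb_years:.1f} years")
print(f"10EB: {ten_eb_years:.0f} years")
```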

Well maybe this is just old-fashioned thinking, but I'm inclined to think we don't need friendly Exabyte file sizes for website media downloads just yet. In the words of the old Extreme Programming mantra, "You ain't gonna need it". (YAGNI)

I'm not here to take a rise out of the hard-working hackers that contribute so much to us all. Really. I can't say that strongly enough. It made me laugh out loud, that's all.

Watch out for this Tridion quantum leap!

With the release of SDL Tridion Sites 9.1, they've made a startling leap in their platform support for Java.

In Sites 9, Java 8 is supported. It's not deprecated, and Java 11 is not yet mentioned.

In Sites 9.1, Java 8 support is totally gone, and the only supported version is Java 11.

I was certainly caught out. In trying to puzzle out a plausible upgrade path, before I'd actually read through and absorbed all the details, I'd raised a support ticket that turned out to be unnecessary, or at least premature. We'll be going to Sites 9, so the leap to Java 11 is a little further up the road.

I get it that they have all sorts of good reasons for this. The only possible scenario I can think of for not deprecating Java 8 is that they didn't know this was needed when they released Sites 9. It's also true that we have very, very little of the Tridion product actually baked in to the application these days.

I'm still calling this a gotcha.

Discovery service in Tridion Sites nine has two storage configs

I just got bitten by a little "gotcha" in SDL Tridion Sites 9. When you unpack the installation zip, you'll find that in the Content Delivery/roles/Discovery folder, there's a separate folder for registration, with the registration tool and its own copy of cd_storage_conf.xml. The idea seems to be that running the service and registering capabilities are two separate activities. I kind of get that. When I first saw the ConfigRepository element at the bottom of Discovery's configuration, I felt like it had been shoehorned into a somewhat awkward place. Yet now, it seems even more awkward. So sure, both the service and the registration tool need access to the storage settings for the discovery database, while only the registration tool needs the configuration repository.

The main difference seems to be one of security. The version of the config that goes with the registration tool has the ClientId and ClientSecret attributes while the other doesn't. This, in fact, is the gotcha that caught me out; I'd copied the storage config from the service, and ended up being unable to perform an update. The error output did mention being unable to get an OAuth token, but I didn't immediately realise that the missing ClientId and ClientSecret were the reason. Kudos to Damien Jewett for his answer on Stack Exchange, which saved me some hair-pulling.
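A quick way to catch this before running the registration tool is to check the file for the two attributes. The sketch below is a minimal Python illustration, not anything that ships with the product. It assumes the attributes live on the ConfigRepository element, and the sample XML (including the ServiceUri value and the secret) is a made-up, heavily trimmed stand-in for a real cd_storage_conf.xml.

```python
import xml.etree.ElementTree as ET
from typing import List

# Hypothetical, heavily trimmed sample: the element layout and the
# attribute values are assumptions, not a copy of a real config file.
SAMPLE_CONFIG = """<Configuration>
  <ConfigRepository ServiceUri="http://localhost:8082/discovery.svc"
                    ClientId="registration" ClientSecret="not-a-real-secret" />
</Configuration>"""

REQUIRED_ATTRIBUTES = ("ClientId", "ClientSecret")


def missing_credentials(config_xml: str) -> List[str]:
    """Return the required attribute names that ConfigRepository lacks."""
    root = ET.fromstring(config_xml)
    repository = root.find(".//ConfigRepository")
    if repository is None:
        # No repository element at all, so everything is missing.
        return list(REQUIRED_ATTRIBUTES)
    return [name for name in REQUIRED_ATTRIBUTES if name not in repository.attrib]


print(missing_credentials(SAMPLE_CONFIG))
```

An empty list means both attributes are present; anything else names what to add before attempting an update.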

I'm left wondering if this is the end game, or whether a future version will see some further tidying up or separation of concerns.

EDIT: On looking at this again, I realised that even in 8.5 we had two storage configs. The difference is that in 8.5 both had the ClientId and ClientSecret attributes.

Getting gvim to work from the Ubuntu on Windows bash prompt

Just lately I've been tinkering a bit more with Linux-y things, among them trying to get to grips with a bit of bash scripting. As my main work environment is a Windows 10 system, the obvious place for such tinkering is in the Windows Subsystem for Linux (WSSL or WSL depending on whose abbreviation you favour). In any case, the bash prompt in Windows.

Generally, WSSL works rather well, <rant>my main proviso there being the really unhelpful problems with permissions. I get it... it's probably a really nasty job to fix it, but really!.... for chmod to be broken is just wrong! More to the point, it means I can't use a private key for ssh logins to other systems. Maybe I'll go back to cygwin after all.</rant>

Anyway, today's problem was rather more tractable. I wanted to edit a bash script using gvim. My first attempt was just to open it from the bash prompt:

dominic@DOMINIC:/mnt/d/code/bash$ gvim foo.sh

E233: cannot open display

Press ENTER or type command to continue

Yeah OK, that then falls back to a standard vim session in the terminal, but if that's what I'd wanted, I wouldn't have typed 'gvim'.

It turns out that there's a version of gvim in the Ubuntu user-space stuff that comes with WSSL. When you type gvim at the prompt, it finds /usr/bin/gvim in the PATH, and tries to open that.

Nil desperandum

dominic@DOMINIC:/mnt/d/code/bash$ file /usr/bin/gvim

/usr/bin/gvim: symbolic link to `/etc/alternatives/gvim'

dominic@DOMINIC:/mnt/d/code/bash$ sudo unlink /usr/bin/gvim

dominic@DOMINIC:/mnt/d/code/bash$ sudo ln -s /mnt/c/Program\ Files\ \(x86\)/vim/vim80/gvim.exe /usr/bin/gvim

After that it worked like a treat. Maybe the other way to go would be to see if you can get an XWindows server running on WSSL, but this got me up and running without having to get into even more faff with copies of rc files and whatnot.

deployer-conf.xml barfs on the BOM

Today I was working on some scripts to provision, among other things, the SDL Web deployer service. It should have been straightforward enough, I thought. Just copy the relevant directory and fix up a couple of configuration files. Well I got that far, at least, but my deployer service wouldn't start. I looked in the logs and found this:

2017-09-16 19:20:21,907 ERROR NonLegacyConfigConditional - The operation could not be performed.

com.sdl.delivery.configuration.ConfigurationException: Could not load legacy configuration

at com.sdl.delivery.deployer.configuration.DeployerConfigurationLoader.configure(DeployerConfigurationLoader.java:136)

at com.sdl.delivery.deployer.configuration.folder.NonLegacyConfigConditional.matches(NonLegacyConfigConditional.java:25)

I thought it was going to be a right head-scratcher. Fortunately, a little further down there was something a little more clue-bestowing:

Caused by: org.xml.sax.SAXParseException: Content is not allowed in prolog.

at org.apache.xerces.parsers.DOMParser.parse(Unknown Source)

at org.apache.xerces.jaxp.DocumentBuilderImpl.parse(Unknown Source)

at com.tridion.configuration.XMLConfigurationReader.readConfiguration(XMLConfigurationReader.java:124)

So it was about the XML. It seems that Xerces thought I had content in my prolog. Great! At least, despite its protestations about a legacy configuration, there was a good clear message pointing to my "deployer-conf.xml". So I opened it up, thinking maybe my script had mangled something, but it all looked great. Then some subliminal, ancestral memory made me think of the Byte Order Mark. (OK, OK, it was Google, but honestly... the ancestors were there talking to me.)

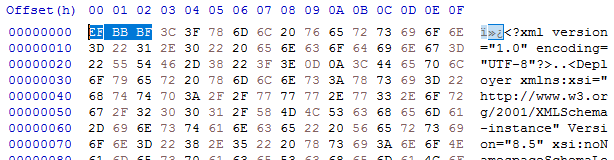

I opened up the deployer-conf.xml again, this time in a byte editor, and there it was, as large as life:

Three extra bytes that Xerces thought had no business being there: the Byte Order Mark, or BOM. (I had to check that. I'm more used to a two-byte BOM, but for UTF-8 it's three. And yes - do follow this link for a more in-depth read, especially if you don't know what a BOM is for. All will be revealed.)

What you'll also find if you follow that link is that Xerces is perfectly entitled to think that, as it's a "non-normative" part of the standard. Great eh?
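If you don't have a byte editor to hand, the two-versus-three byte business is easy to check. This is a small Python sketch using the standard `codecs` module; the helper name `starts_with_utf8_bom` is my own invention for illustration.

```python
import codecs

# The byte order marks that Python's standard library knows about.
print(codecs.BOM_UTF8)      # three bytes for UTF-8
print(codecs.BOM_UTF16_LE)  # two bytes for UTF-16, little-endian
print(codecs.BOM_UTF16_BE)  # two bytes for UTF-16, big-endian


def starts_with_utf8_bom(data: bytes) -> bool:
    """True if the raw bytes begin with the UTF-8 byte order mark."""
    return data.startswith(codecs.BOM_UTF8)
```

Reading the first few bytes of a suspect file in binary mode and passing them to the helper is enough to confirm the diagnosis.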

Anyway - so how did the BOM get there, and what was the solution?

My provisioning scripts are written in Windows PowerShell, and I'd chosen to use PowerShell's "native" XML processing, which amounts to System.Xml.XmlDocument. In previous versions of these scripts, I'd used XLinq, but it's not really a good fit with PowerShell as you can't really use XPath without extension methods. So I gave up XLinq's ease of parsing fragments for a return to XmlDocument. To be honest, I wouldn't be surprised if the BOM problem also happens with XLinq: after all, it's Xerces that's being fussy - you could argue Microsoft is playing "by the book".

So what I was doing was this.

$config = [xml](gc $deployerConfig)

Obviously, $deployerConfig refers to the configuration file, and I'm using PowerShell's Get-Content cmdlet to read the file from disk. The [xml] cast automatically loads it into an XmlDocument, represented by the $config variable. I then do various manipulations in the XmlDocument, and eventually I want to write it back to disk. The obvious thing to do is just use the Save() method to write it back to the same location, like this:

$config.Save($deployerConfig)

Unfortunately, this gives us the unwanted BOM, so instead we have to explicitly control the encoding, like this:

$encoding = new-object System.Text.UTF8Encoding $false

$writer = new-object System.IO.StreamWriter($deployerConfig,$false,$encoding)

$config.Save($writer)

$writer.Close()

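As you can see, we're still using Save(), but this time with the overload that takes a writer rather than a file name, so the encoding is whatever we gave the StreamWriter: UTF-8 with the BOM switched off (that's the $false passed to the UTF8Encoding constructor). This seems to work fine, and Xerces doesn't cough its lunch up when you try to start the deployer.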
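If a BOM has already made it into a file, it can also be stripped after the fact. Here is a minimal Python sketch of that clean-up, offered as an alternative rather than as part of my provisioning scripts; the function name `strip_utf8_bom` and the `<Deployer/>` demo content are made up for illustration.

```python
import codecs
import os
import tempfile


def strip_utf8_bom(path: str) -> bool:
    """Rewrite the file without a leading UTF-8 BOM.

    Returns True if a BOM was found and removed, False otherwise.
    """
    with open(path, "rb") as handle:
        data = handle.read()
    if not data.startswith(codecs.BOM_UTF8):
        return False
    with open(path, "wb") as handle:
        handle.write(data[len(codecs.BOM_UTF8):])
    return True


# Demonstration with a throwaway file. "utf-8-sig" is the Python codec
# that writes a BOM; plain "utf-8" does not.
demo_path = os.path.join(tempfile.mkdtemp(), "deployer-conf.xml")
with open(demo_path, "w", encoding="utf-8-sig") as handle:
    handle.write("<Deployer/>")

print(strip_utf8_bom(demo_path))  # BOM present on the first pass
print(strip_utf8_bom(demo_path))  # nothing left to strip on the second
```

Working on raw bytes keeps the rest of the file untouched, whatever else is in it.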

I think it will be increasingly common for people to script their setups. SDL's own "quickinstall" doesn't use an XML parser at all, but simply does string replacements based on its own, presumably hand-made, copies of the configuration files. Still - one of the obvious benefits of having XML configuration files is that you can use XML processing tools to manipulate them, so I hope future versions of the content delivery microservices will be more robust in this respect. Until then, here's the workaround. As usual - any feedback or alternative approaches are welcome.