Dominic Cronin's weblog

Connecting to Microsoft SQL Server Developer from Tridion Content Delivery

I've recently been setting up a development image for SDL Web 8.5, and as it's only for use on my development rig, it's fair game to use Microsoft SQL Server Developer edition. It's not supported by SDL, but it's close enough to make it a reasonable risk for my purposes. I got the databases set up and the content manager installed OK, so I moved on to the content delivery stack.

First I hacked together a database test script to make sure I had all the logins correct etc. I've done it this way for years, and you may have seen my blog post about it from quite a long time ago. Everything seemed fine.

I'd started with the Discovery service, and I'd configured the cd_storage_conf.xml with the relevant database settings I'd just tested. How hard could it be? Except that it didn't work. I got messages in the logs telling me to check my firewall. Doh! Off I went and opened up the firewall ports for my microservices (which I'd forgotten to do) and also 1433 for MSSQL. Still no joy.

Somewhere along the way I'd also disabled loopback checking and double-checked a bunch of other things that can cause trouble. No joy.

I went back to my database test script a few times. It uses a System.Data.SqlClient.SqlConnection to execute a simple command. The connection string specifies '(local)' as the server. I'd had trouble using '(local)' in the cd_storage_conf.xml in a previous version of Tridion, so I had specified 'localhost' instead, and then, when that didn't work, a different name that mapped to the same interface. Still nothing.

The troubling thing was that the test script worked fine. Why was that, when Tridion's Java stack had trouble doing the same thing? I should have cottoned on to this way earlier, but eventually I started checking to see if there was actually anything listening on 1433. No, there wasn't. Well, that helped. And then I started poking around in the network configuration of SQL Server. Sure enough: TCP/IP wasn't enabled. I'm still not sure if this is a Developer edition thing. I seem to recall having come across it before; maybe I'd had trouble with SQLEXPRESS. I'm not the only one, either: now that I know the answer, finding a suitable Stack Overflow answer is easy!
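With hindsight, a quick check from the client side would have saved some time. Here is a minimal Java sketch of such a check; the class and method names are my own, and localhost/1433 are just defaults. It simply tries to open a TCP connection. Unlike running netstat on the server, it also exercises any firewall between client and server, but a successful connect only shows that *something* is listening, not that it's SQL Server.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Rough, cross-platform stand-in for "is anything listening on 1433?".
// A successful connect proves only that some process accepted the TCP
// connection, not that the listener is SQL Server.
class PortCheck {

    // Returns true if a TCP connection to host:port is accepted within timeoutMillis.
    static boolean isListening(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Refused, timed out (e.g. dropped by a firewall), or host name not resolvable.
            return false;
        }
    }

    public static void main(String[] args) {
        String host = args.length > 0 ? args[0] : "localhost";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 1433;
        boolean open = isListening(host, port, 2000);
        System.out.println(host + ":" + port
                + (open ? " accepts TCP connections" : " does not accept TCP connections"));
    }
}
```

Running `java PortCheck localhost 1433` against an instance with TCP/IP disabled should report that nothing accepts connections.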

At least that explained why my test script worked OK. The SqlConnection client sees '(local)' and is then able to attempt a named pipes or shared memory connection as well as TCP/IP. The Java client, on the other hand, doesn't have this repertoire of options, and if TCP/IP fails, it's over.
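The lesson for my test script is to test with the same client stack that Tridion uses. A minimal sketch of a JDBC-based equivalent follows, assuming the Microsoft JDBC driver is on the classpath at runtime. The class name is my own, and the connection values are placeholders borrowed from the storage configuration further down this page, so substitute your own. Because it goes through JDBC, it only ever uses TCP/IP, just like Tridion's Java stack.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

// Connection test that goes through JDBC (TCP/IP only) instead of
// System.Data.SqlClient, so it fails the same way the Java stack would.
// Assumes the Microsoft JDBC driver (mssql-jdbc) is on the classpath.
class JdbcSmokeTest {

    // Returns null on success, or the error message if connecting or querying fails.
    static String tryConnect(String url, String user, String password) {
        try (Connection conn = DriverManager.getConnection(url, user, password);
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery("SELECT 1")) {
            return rs.next() ? null : "SELECT 1 returned no rows";
        } catch (SQLException e) {
            return e.getMessage();
        }
    }

    public static void main(String[] args) {
        // Placeholder values: use the ones from your own cd_storage_conf.xml.
        String url = "jdbc:sqlserver://localhost:1433;databaseName=Tridion_Broker";
        String error = tryConnect(url, "TridionBrokerUser", "topsecret");
        System.out.println(error == null ? "OK" : "FAILED: " + error);
    }
}
```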

Anyway - now it's fixed. Just time for a quick Note To Self, and on with the rest of my system.

Moving your Tridion databases

As part of setting up my new laptop, I installed MSSQL and obviously I wanted to have my existing Tridion databases available. My Tridion image had previously not had a database - I had that running natively on the old laptop, but I'd decided to go with a more conventional approach and run it in the image with Tridion. This transition had a couple of interesting moments, and hence this post.

Moving the databases and getting MSSQL security working again.

The moving part was fairly simple. I just detached all the databases, copied the pairs of .MDF and .LDF files over to the new location, and attached them there.

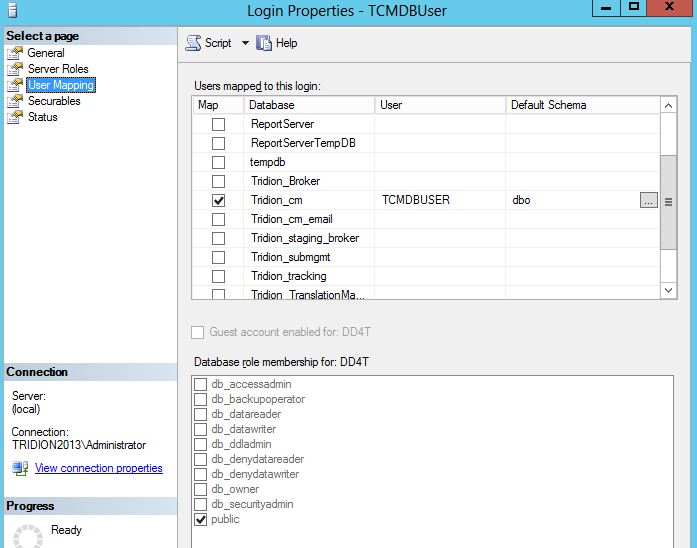

Once you've done this, if you look under Security/Users in each database, you'll find a User with a name that matches the login that you use in your Tridion configuration, for example TcmDbUser. Unfortunately, this isn't enough. There are (at least) two kinds of User. The one you can see in your database (strictly speaking, a "database principal") can't be used for logging in. For that you need a "server principal", and these are to be found in your MSSQL instance under Security/Logins. For everything to work correctly, there needs to be a mapping between the database principal and the server principal. You can see this if you look in a correctly configured system: right-click on the login, open the properties, and go to the User Mapping page. It should look something like this:

So what we're aiming for is to have a matching Login and database User, with the same name. Creating a Login is easy enough, but if you try to add the mapping by hand in the User Mapping page, it will fail, because it wants to create a database user, and a database user with the same name already exists. (You could delete it, but then you'd have a world of pain trying to figure out all the properties and settings that the Tridion database scripts take care of automatically. I'm not even sure if support would ever talk to you again if you did this.)

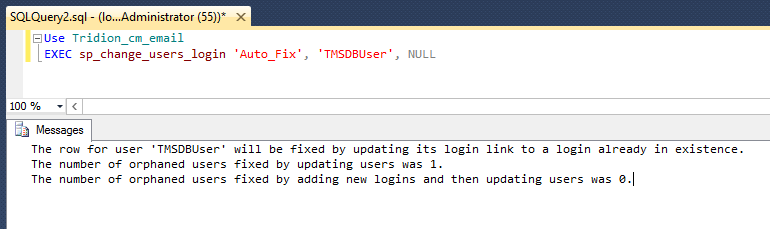
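Why doesn't a matching name suffice? SQL Server links a database user to a login by security identifier (SID), not by name. A SQL login created fresh on the new server gets a new SID, so the name still matches, but the SID stored with the user in the attached database doesn't, and the user is "orphaned". The following is a minimal Java sketch, assuming a JDBC connection to the database in question made with administrative rights; the class and method names are my own. It lists SQL users whose SID doesn't belong to any login.

```java
import java.sql.Connection;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Finds "orphaned" database users: users whose SID matches no server login.
// Only SIDs are compared; names play no part in the mapping.
class OrphanedUsers {

    // SQL users in the current database, skipping dbo, guest, INFORMATION_SCHEMA and sys (principal_id 1-4).
    static final String DB_USERS_SQL =
            "SELECT name, sid FROM sys.database_principals WHERE type = 'S' AND principal_id > 4";
    // SQL logins on the instance.
    static final String LOGINS_SQL =
            "SELECT name, sid FROM sys.server_principals WHERE type = 'S'";

    // Upper-case hex rendering of a SID, so SIDs can be compared as strings.
    static String hex(byte[] bytes) {
        if (bytes == null) return "";
        StringBuilder sb = new StringBuilder();
        for (byte b : bytes) sb.append(String.format("%02X", b));
        return sb.toString();
    }

    // Reads name -> SID (as hex) for the rows returned by sql.
    static Map<String, String> readSids(Connection conn, String sql) throws SQLException {
        Map<String, String> result = new TreeMap<>();
        try (Statement stmt = conn.createStatement(); ResultSet rs = stmt.executeQuery(sql)) {
            while (rs.next()) result.put(rs.getString("name"), hex(rs.getBytes("sid")));
        }
        return result;
    }

    // Names of users whose SID is not the SID of any login, in name order.
    static List<String> orphans(Map<String, String> userSids, Map<String, String> loginSids) {
        List<String> result = new ArrayList<>();
        for (Map.Entry<String, String> user : new TreeMap<>(userSids).entrySet()) {
            if (!loginSids.containsValue(user.getValue())) result.add(user.getKey());
        }
        return result;
    }

    // conn should point at the database being checked (e.g. databaseName=... in the URL).
    static List<String> findOrphans(Connection conn) throws SQLException {
        return orphans(readSids(conn, DB_USERS_SQL), readSids(conn, LOGINS_SQL));
    }
}
```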

Fortunately, there's a better way. You can do it via SQL with various ALTER USER commands, but then you are going to be deeper into the security features of MSSQL than any normal person ought to wish for. (In this context, DBAs aren't normal, but then they won't be needing to read this blog post, will they?) However, you don't need to figure out all that SQL, because there's a system procedure (sp_change_users_login) that does exactly what you want. As long as your Login and User have the same name, you can just use its Auto_Fix action in each database, for example: `EXEC sp_change_users_login 'Auto_Fix', 'TcmDbUser'`
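If you have several Tridion databases to fix up, the call can be scripted. Below is a minimal Java sketch, assuming a JDBC connection with administrative rights; the class is my own, and the database name Tridion_cm is a hypothetical example. It builds the sp_change_users_login batch and also the single-statement ALTER USER ... WITH LOGIN form, which Microsoft documents as the replacement for the deprecated sp_change_users_login.

```java
import java.sql.Connection;
import java.sql.SQLException;
import java.sql.Statement;

// Builds and runs statements that re-link a database user to the login with the same name.
class AutoFixUsers {

    // T-SQL identifier in square brackets, with any ']' doubled.
    static String bracket(String identifier) {
        return "[" + identifier.replace("]", "]]") + "]";
    }

    // T-SQL Unicode string literal, with any single quote doubled.
    static String literal(String value) {
        return "N'" + value.replace("'", "''") + "'";
    }

    // The (deprecated but still available) procedure discussed in the text.
    static String autoFixBatch(String database, String user) {
        return "USE " + bracket(database) + "; EXEC sp_change_users_login 'Auto_Fix', " + literal(user) + ";";
    }

    // The replacement form: point the user at the login of the same name.
    static String alterUserBatch(String database, String user) {
        return "USE " + bracket(database) + "; ALTER USER " + bracket(user)
                + " WITH LOGIN = " + bracket(user) + ";";
    }

    // Executes one batch on an existing connection.
    static void run(Connection conn, String batch) throws SQLException {
        try (Statement stmt = conn.createStatement()) {
            stmt.execute(batch);
        }
    }

    public static void main(String[] args) {
        // Hypothetical database name; TcmDbUser is the example user from the post.
        System.out.println(autoFixBatch("Tridion_cm", "TcmDbUser"));
        System.out.println(alterUserBatch("Tridion_cm", "TcmDbUser"));
    }
}
```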

Remembering the database settings you'd forgotten about.

So I had all the MSSQL stuff correctly set up, or so I thought, but when I started to try to use the Tridion GUI, I kept getting error notifications in the Message centre.

A network-related or instance-specific error occurred while establishing a connection to SQL Server. The server was not found or was not accessible.

Verify that the instance name is correct and that SQL Server is configured to allow remote connections. (provider: SQL Network Interfaces, error: 26 - Error Locating Server/Instance Specified)

This was pretty odd. I could see most of the GUI working fine, and publications were listed OK, but other lists weren't populated. I speculated that it might only be lists served via service calls that had problems, but when I checked the Core Service, it was able to list out my entire system. I spent quite some time fiddling with various settings and checking that named pipes etc. were configured correctly, before I eventually got smart enough to check T-REX again. In an old post from 2011, Rick Pannekoek suggested that a similar problem might be caused by the outbound email configuration.

Sure enough - I'd forgotten that outbound email has its own database configuration (if I'd ever known it - the installer sets it all up and mostly you never need to look there, unless you're actually doing outbound email). Anyway - I certainly hadn't realised that this would break the Content Manager's GUI.

A quick visit to:

C:\Program Files (x86)\Tridion\config\OutboundEmail.xml

and then a bit of fiddling with decrypting and re-encrypting (there are scripts for this that come with the installer), and I had my system in fully working order.

MSSQL TCP Dynamic ports and Tridion Content Delivery

Recently, I was setting up Tridion content delivery on my development server. This runs as a VMware image on my laptop, while the MSSQL database runs without virtualisation on the same laptop. If you read this earlier post, you will know that I like to have a script to check all my settings in advance (the script uses the standard .NET data access classes). I had done this, and everything was fine, so I was mildly surprised to find that as soon as I tried to publish anything, I got error messages about being unable to connect to the database. The relevant part of my storage configuration looked like this:

<Storage Id="brokerdb" Type="persistence" dialect="MSSQL" Class="com.tridion.storage.persistence.JPADAOFactory">
  <Pool Type="jdbc" Size="5" MonitorInterval="60" IdleTimeout="120" CheckoutTimeout="120" />
  <DataSource Class="com.microsoft.sqlserver.jdbc.SQLServerDataSource">
    <Property Name="serverName" Value="WSL117\DEVELOPER" />
    <Property Name="portNumber" Value="1433" />
    <Property Name="databaseName" Value="Tridion_Broker" />
    <Property Name="user" Value="TridionBrokerUser" />
    <Property Name="password" Value="topsecret" />
  </DataSource>
</Storage>

OK - so all those settings were just the same as in my test script, except that the test script didn't specify the port number, but that's the default port, so nothing to see there, eh?

Anyway - the message was clear: it was something to do with the connection, so I went to check that MSSQL was listening on the expected port with a quick "netstat -oan". Lo and behold, it was nowhere to be seen. Eventually I discovered that in SQL Server Configuration Manager you can configure the port, and that there's a setting called "TCP Dynamic Ports", which was switched on.

At this point, I could simply have configured a static port number and moved on, but I was intrigued. If MSSQL wasn't listening on a static port, how did my test script succeed? OK - it didn't seem too unreasonable that whatever mechanism was in play should be understood by the .NET Framework, but could I get it to work from a Java-based system? Well, after a bit of Googling, it turned out that there's a service called the SQL Server Browser, which lets the client know what port it needs to connect to. Not only that, but it seems the Microsoft JDBC driver, which I was using, also supports this mechanism. I commented out the Property element that specifies the port number, restarted IIS (this was an HTTP Upload site) and, sure enough, when I tested it again, everything worked great.
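Why did commenting out the port help? The Microsoft JDBC driver documentation on building the connection URL says that if both a port number and an instance name are given, the port number takes precedence and the instance name is ignored. So with portNumber set to 1433, the driver went straight to 1433, where nothing was listening, instead of asking the Browser where the DEVELOPER instance was. The sketch below models those documented rules; the class and methods are my own illustration, not part of the driver, and I'm assuming the serverName and portNumber properties on SQLServerDataSource follow the same rules as the URL form.

```java
// Models the connection-target rules documented for the Microsoft JDBC driver:
// an explicit port wins over an instance name; an instance name alone means
// "ask the SQL Server Browser"; neither means the default port 1433.
class JdbcTarget {

    // jdbc:sqlserver://serverName[\instanceName][:portNumber];databaseName=...
    // Pass null for instanceName or portNumber to leave them out.
    static String url(String serverName, String instanceName, Integer portNumber, String databaseName) {
        StringBuilder sb = new StringBuilder("jdbc:sqlserver://").append(serverName);
        if (instanceName != null) sb.append('\\').append(instanceName);
        if (portNumber != null) sb.append(':').append(portNumber);
        return sb.append(";databaseName=").append(databaseName).toString();
    }

    // Where the driver will try to connect, per the precedence rules above.
    static String describeTarget(String instanceName, Integer portNumber) {
        if (portNumber != null) {
            return "TCP port " + portNumber + (instanceName != null ? " (instance name ignored)" : "");
        }
        if (instanceName != null) {
            return "port looked up via the SQL Server Browser (UDP 1434)";
        }
        return "TCP port 1433 (default)";
    }

    public static void main(String[] args) {
        // The original configuration: instance name plus an explicit 1433.
        System.out.println(url("WSL117", "DEVELOPER", 1433, "Tridion_Broker")
                + " -> " + describeTarget("DEVELOPER", 1433));
        // With the portNumber property commented out.
        System.out.println(url("WSL117", "DEVELOPER", null, "Tridion_Broker")
                + " -> " + describeTarget("DEVELOPER", null));
    }
}
```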

All this: the sweet smell of success, and yet somehow I was still troubled. Why on earth would Microsoft have introduced this dynamic mechanism? After all, it just means more configuration. Extra stuff to tweak. Extra stuff to go wrong. So why? It turns out that the answer is pretty straightforward. This quote from the SQL Server Help explains it all:

Prior to SQL Server 2000, only one instance of SQL Server could be installed on a computer. SQL Server listened for incoming requests on port 1433, assigned to SQL Server by the official Internet Assigned Numbers Authority (IANA). Only one instance of SQL Server can use a port, so when SQL Server 2000 introduced support for multiple instances of SQL Server, SQL Server Resolution Protocol (SSRP) was developed to listen on UDP port 1434. This listener service responded to client requests with the names of the installed instances, and the ports or named pipes used by the instance. To resolve limitations of the SSRP system, SQL Server 2005 introduced the SQL Server Browser service as a replacement for SSRP.
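The Browser protocol itself is small enough to poke at directly. The following is a minimal Java sketch based on my reading of Microsoft's SQL Server Resolution Protocol specification ([MC-SQLR]). It sends a CLNT_UCAST_INST request (a 0x04 byte followed by the instance name) to UDP port 1434 and parses the SVR_RESP reply, whose text is a semicolon-separated list of keys and values such as InstanceName and tcp. The host and instance names are the ones from my configuration above; the class is my own, and it only handles the single instance record returned for this kind of request.

```java
import java.io.IOException;
import java.net.DatagramPacket;
import java.net.DatagramSocket;
import java.net.InetAddress;
import java.nio.charset.StandardCharsets;
import java.util.HashMap;
import java.util.Map;

// Minimal SQL Server Resolution Protocol client: asks the SQL Server Browser
// on UDP 1434 about one named instance and parses the reply.
class BrowserQuery {

    // CLNT_UCAST_INST: 0x04, the instance name, then a terminating zero byte.
    static byte[] instanceRequest(String instanceName) {
        byte[] name = instanceName.getBytes(StandardCharsets.US_ASCII);
        byte[] request = new byte[name.length + 2]; // last byte stays 0
        request[0] = 0x04;
        System.arraycopy(name, 0, request, 1, name.length);
        return request;
    }

    // SVR_RESP: 0x05, 2-byte little-endian length, then "key;value;...;;" text.
    // Only the first instance record (up to ";;") is parsed.
    static Map<String, String> parseResponse(byte[] data, int length) {
        if (length < 3 || data[0] != 0x05) {
            throw new IllegalArgumentException("Not an SVR_RESP packet");
        }
        int size = (data[1] & 0xFF) | ((data[2] & 0xFF) << 8);
        String text = new String(data, 3, Math.min(size, length - 3), StandardCharsets.US_ASCII);
        String[] parts = text.split(";;")[0].split(";");
        Map<String, String> fields = new HashMap<>();
        for (int i = 0; i + 1 < parts.length; i += 2) {
            fields.put(parts[i], parts[i + 1]);
        }
        return fields;
    }

    static Map<String, String> query(String host, String instanceName, int timeoutMillis) throws IOException {
        byte[] request = instanceRequest(instanceName);
        try (DatagramSocket socket = new DatagramSocket()) {
            socket.setSoTimeout(timeoutMillis); // receive() throws SocketTimeoutException if no reply
            socket.send(new DatagramPacket(request, request.length, InetAddress.getByName(host), 1434));
            byte[] buffer = new byte[65535];
            DatagramPacket reply = new DatagramPacket(buffer, buffer.length);
            socket.receive(reply);
            return parseResponse(reply.getData(), reply.getLength());
        }
    }

    public static void main(String[] args) throws IOException {
        Map<String, String> info = query("WSL117", "DEVELOPER", 2000);
        System.out.println("Instance " + info.get("InstanceName") + " listens on TCP port " + info.get("tcp"));
    }
}
```

If the query times out, the SQL Server Browser service probably isn't running, or UDP 1434 is blocked by a firewall.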

I had installed a named instance of MSSQL alongside the existing SQLEXPRESS instance, so perhaps I should have figured this out myself. Whatever - at least it explains why things are set up this way. I chatted with a colleague from Indivirtual's hosting partner Sentia, and he confirmed that for Tridion infrastructure jobs, one of the tasks they have to do is configure MSSQL to listen on static ports. For a dedicated server, of course, this is the obvious choice.

Still - for the kind of configuration I have for development and research, it's great that the dynamic ports feature works well with Tridion. Of course, that's not the same thing as being a supported configuration. As with any enterprise software vendor, SDL Tridion generally only supports configurations it has tested. In order to ensure that this gets "on to their radar", I've created an "idea" on ideas.sdltridion.com. (If you have a login there, you can go and vote it up if you like!) Hopefully in future releases, this will become a tested and supported configuration.

Anyway - there was a time when I did far more infrastructure work than I've done lately. I guess it shows!