Dominic Cronin's weblog

Spoofing a MAC address in gentoo linux

I spent a few hours this weekend fiddling with networking things at home. One of the things I ran into was that the DHCP server provided by my ISP was behaving erratically. Specifically, it was being very fussy about giving out a new lease. It would give out a lease to a Windows 7 system I was using for testing, but not to my Gentoo server. At some point, having spent the day with this kind of frustration, I was ready to put up with almost any hack to get things running. Someone on the #gentoo IRC channel suggested that spoofing the MAC address that already had a lease might be a solution. Their solution was to do this:

```
ifconfig eth0 down
ifconfig eth0 hw ether 08:07:99:66:12:01
ifconfig eth0 up
```

Here, you have to imagine that eth0 is the name of the interface, although on my system it isn't any more. (Another thing I learned this weekend was about predictable interface names.) You should also imagine that 08:07:99:66:12:01 is the MAC address of the network interface on my Win7 system.

The trouble with this is that it doesn't integrate very well with the standard init scripts that get things going on a Gentoo system. Network interfaces are started by running /etc/init.d/net.eth0 (although that's just a link to another script). The configuration is to be found in /etc/conf.d/net, where you can add directives that control the way your network interfaces are configured. The most important of these are the ones that begin with "config_". For example, to set up a static IP for eth0, you might say something like:

config_eth0="192.168.0.99 netmask 255.255.255.0 brd 192.168.0.255"

or for DHCP it's much simpler:

config_eth0="dhcp"

So my obvious first try for setting up a spoofed MAC address was something like this:

config_eth0="dhcp hw ether 08:07:99:66:12:01"

but this didn't work at all. Anyway - after a bit of fiddling and more Googling (sorry - I can't remember where I found this), it turned out that there's a specific directive just for this purpose. I tried this:

```
mac_eth0="08:07:99:66:12:01"
config_eth0="dhcp"
```

It works a treat. Note that the order is important, which is obvious once you know it I suppose, but wasn't obvious to me until I'd got it wrong once.
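Since /etc/conf.d/net is just a file of shell variable assignments, it's easy enough to generate those two lines. Here's a minimal Python sketch of that. The helper names (is_valid_mac, spoofed_dhcp_config) are made up for illustration, it only accepts colon-separated MAC addresses, and it assumes the interface name can be used directly in the variable name (as eth0 or enp3s0 can). It emits mac_ before config_ simply because that's the order that worked for me.

```python
import re

# Six pairs of hex digits separated by colons, e.g. 08:07:99:66:12:01
MAC_RE = re.compile(r"[0-9A-Fa-f]{2}(?::[0-9A-Fa-f]{2}){5}")
# Letters, digits and underscores only, so the name is usable in a shell variable
IFACE_RE = re.compile(r"[A-Za-z0-9_]+")


def is_valid_mac(mac: str) -> bool:
    """True if mac is a colon-separated, six-octet hardware address."""
    return MAC_RE.fullmatch(mac) is not None


def spoofed_dhcp_config(interface: str, mac: str) -> str:
    """Return /etc/conf.d/net lines that spoof `mac` and then request DHCP."""
    if IFACE_RE.fullmatch(interface) is None:
        raise ValueError(f"interface name not usable in a variable name: {interface!r}")
    if not is_valid_mac(mac):
        raise ValueError(f"not a colon-separated MAC address: {mac!r}")
    # mac_ first, then config_: the order that worked in the post
    return f'mac_{interface}="{mac}"\nconfig_{interface}="dhcp"\n'


print(spoofed_dhcp_config("eth0", "08:07:99:66:12:01"), end="")
```

Nothing is written to disk; you'd paste the output into /etc/conf.d/net yourself.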

The good news after that was that for an established lease, everything worked rather better.

Managing the Tridion Core service powershell module as a git submodule

N.B. Peter has changed the structure of the module (as he has every right to do, and I'm not complaining) - what this means is that this blog post is pretty useless other than as an exercise in poking at things. Maybe I'll figure it out, but in the meantime, assume that this technique won't work.

I spend quite some time fiddling with various PowerShell implementations on my Tridion image. Whenever there's a place where I do experimental things like this, I run the risk that I'm going to break something, so at the very least I usually do a quick "git init" in the directory, add the files and commit them. Then I have the benefit of version diffs and rollbacks if I need them. The next step comes when I realise that it's something I'm going to work on over a longer time, and that I really would prefer not to lose. At this point, I usually go on to my Linux server, init a bare git repository, and then push from wherever I'm working.

Today I reached this second phase with the WindowsPowerShell directory of the Administrator account on my Tridion image. (It's about time, because I'm busy preparing a talk for the Tridion Developer Summit in a couple of weeks, and, well, losing my scripts would put a kink in my plans, to say the least.)

In any case, I'd realised that I was running quite an old version of Peter Kjaer's Tridion-CoreService module. This module is the basis of pretty much any effort to use the Tridion core service from PowerShell, and as this is the subject of my upcoming talk, I figured I should at least be doing my demos on the current version.

If you go to the github page for the module, you'll see that Peter's provided installation scripts which will help you to get up and running, but of course, if you have git installed, it makes just as much sense to clone the module directly. The only problem I had was that Modules are normally located in the Modules directory under the WindowsPowerShell directory. (You can add other locations to env:PSModulePath, but for what I wanted, that wasn't ideal.)

Fortunately, Git is widely used for projects that make use of other projects, and there is very good support built in, by way of git-submodule. As my main git repository for the PowerShell stuff is directly in the WindowsPowerShell directory, all I needed to do was add Peter's module as a submodule with the right path.

In fact I just clicked on the menu option in TortoiseGit, but the basic command looks something like this:

git submodule add --name Modules/Tridion-CoreService git@github.com:pkjaer/tridion-powershell-modules.git Modules\Tridion-CoreService

With this in place, git understands that the Tridion-CoreService code belongs to Peter's module, and if he releases a new version, I can just pull. And of course, my own changes go in my own repository. Adding a submodule adds a .gitmodules file in your repository, so if I ever clone my WindowsPowerShell repository into another server, the location of Peter's repository can be retrieved, and the files pulled from there.
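Because .gitmodules is plain text, it's easy to see exactly what a fresh clone has to work with. The sample below is what I'd expect `git submodule add` to write for the command above (an assumption: I haven't reproduced the file byte for byte), and parse_gitmodules is a deliberately small, made-up Python sketch, not a replacement for `git config -f .gitmodules`.

```python
import re

# A section header such as: [submodule "Modules/Tridion-CoreService"]
SECTION_RE = re.compile(r'^\s*\[submodule\s+"([^"]+)"\]\s*$')
# A key/value line such as: path = Modules/Tridion-CoreService
KEY_RE = re.compile(r"^\s*([A-Za-z][\w-]*)\s*=\s*(.*?)\s*$")


def parse_gitmodules(text: str) -> dict[str, dict[str, str]]:
    """Map each submodule name to its settings (path, url, ...).

    Only handles the simple layout that `git submodule add` writes;
    quoting, includes and multi-line values are not supported.
    """
    modules: dict[str, dict[str, str]] = {}
    current = None
    for line in text.splitlines():
        # Skip blank lines and comments
        if not line.strip() or line.lstrip().startswith(("#", ";")):
            continue
        section = SECTION_RE.match(line)
        if section:
            current = modules.setdefault(section.group(1), {})
            continue
        key = KEY_RE.match(line)
        if key and current is not None:
            current[key.group(1)] = key.group(2)
    return modules


SAMPLE = """\
[submodule "Modules/Tridion-CoreService"]
\tpath = Modules/Tridion-CoreService
\turl = git@github.com:pkjaer/tridion-powershell-modules.git
"""

print(parse_gitmodules(SAMPLE))
```

In practice, after cloning the outer repository, it's `git submodule update --init` that reads this file and fetches the submodule's files.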

One word of warning. This is not the official release process for the Tridion-CoreService module. That is described here. As the module is pretty much a one-man affair, it's not unreasonable that there's only the master branch, so pulling from it is at your own risk. Personally I'm happy with the small risk, as it helps me to keep my development system a bit tidier - and heck - if it breaks, we'll fix it!


Tridion bookmarklet challenge: the entries

Back in July, I issued the Tridion Bookmarklet challenge, and shortly afterwards set up a question on meta.tridion.stackexchange.com to manage the entries and the voting. The closing date for entries was 31 Dec 2014, so we've now had all the entries: a grand total of 13. Over the next month, we'll be collecting votes to see which entry, in the eyes of the community, was the best.

For the first few months of the challenge, there was very little activity. I even got a bit nervous that we'd have hardly any entries. I needn't have worried; it hotted up nicely towards the end.

So what did we get for entries? Quite a mixed bag actually - and somewhere in there, quite a few interesting technical insights.

The first entry wasn't a bookmarklet at all. Roel Van Roozendaal chose to create a Chrome browser extension instead. This was a re-implementation of the delete message centre messages functionality that had kicked off the whole bookmarklet discussion in the first place, so nothing new from a functional point of view, but his article shows clearly how to wrap up the functionality in an extension. Certainly there's enough there to inspire you if you were thinking of doing something similar.

We also had an entry that was a bookmarklet, but didn't extend the GUI. Well it was already clear that the rules were pretty relaxed - we're more interested in useful, interesting, inspiring... even if I've been known for pedantry in other contexts :-) So go and check out the entry by Jan Horsman (Jan H), which makes it simple to log in to the Tridion documentation site.

We had multiple entries from several people. Robert Curlette (robrtc) entered Count Items in View, and later Get Schema Id and Title. The first of these really showed the community process in action, with Robert reaching out with a question on Tridion Stack Exchange (TREX). In his second entry, Robert also bravely 'fessed up to his ignorance on TREX and was rewarded. (See how that works, people? He now knows more than he did, and he's helped the rest of us. We need askers as well as answerers - and fortunately Robert does well in both categories.)

Chris Morgan came up with a couple: Get WebDAVUrl and Open Schema. Both of these are going to be useful to Tridion developers doing their daily work.

Frank Taylor (paceaux) also made two entries. He started with PubUp, which simply lets you navigate to where an item is in the BluePrint. Once he'd entered PubUp, the inevitable community interaction took hold, and he was diving into the Anguilla framework with the help of Nuno Linhares. Lo and behold, out of this process, he was inspired also to enter his AnguillaMediator bookmarklet.

Pankaj Gaur created a bookmarklet that would display the file information of a multimedia component. That's not displayed by default in the Tridion GUI, so there will definitely be people who want this. He also did a bookmarklet that would localize an item.

Not everyone did two :-) Rob Stevenson-Leggett did one to rename a Tridion item (which was always an awkward thing to do), and Jonathon Williams created Get Creation Info. Don't worry, guys - one entry is quite enough. These are both useful offerings, and even with two entries, only one can win.

Alexander Orlov (UI Beardcore) also made a single entry, with his Multiple Upload bookmarklet. Alexander is also notable as the person credited with the most "assists" in the challenge. Several people have made use of what we're coming to know as the Beardcore hack to acquire a reference to the Anguilla API in the Tridion GUI.

So thanks to you all for taking the time and trouble to create these bookmarklets and take part in the challenge. It's been great to see the spirit of friendly rivalry, with people learning from each other and even helping to improve competing efforts. I don't know who is going to win, but every single one of you has helped the community by spreading your knowledge and expertise.

Vim Windows weirdnesses

This is just a quick note-to-self to remind me of the stuff I always forget when installing plugins and the like for Vim on a Windows machine. So of course this means gVim. The confusing thing is always that the documentation for everything refers to your ~/.vim directory. And - you haven't got one. Here's the note to self.

Your ~/.vim directory is called vimfiles

And ~ is probably somewhere like C:\Users\dominic - your vimrc will be there too (on Windows it's usually called _vimrc), so you can find it by running vim and doing

:echo $MYVIMRC
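If you'd rather not do the translation in your head every time, here's a small Python sketch that maps the paths used in Unix-oriented plugin docs onto their gVim-on-Windows equivalents. The function name is made up, the home directory is passed in rather than looked up, and mapping ~/.vimrc to _vimrc assumes the conventional Windows file name.

```python
from pathlib import PurePosixPath, PureWindowsPath


def windows_vim_path(unix_path: str, home: str) -> PureWindowsPath:
    """Translate a ~/.vim/... or ~/.vimrc path from the docs to gVim on Windows.

    ~/.vim/...  becomes <home>\\vimfiles\\...
    ~/.vimrc    becomes <home>\\_vimrc  (the conventional Windows name)
    """
    parts = PurePosixPath(unix_path).parts
    if not parts or parts[0] != "~":
        raise ValueError(f"expected a path starting with ~/: {unix_path!r}")
    rest = list(parts[1:])
    if rest and rest[0] == ".vim":
        rest[0] = "vimfiles"
    elif rest == [".vimrc"]:
        rest = ["_vimrc"]
    return PureWindowsPath(home, *rest)


print(windows_vim_path("~/.vim/plugin/foo.vim", r"C:\Users\dominic"))
```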

The Tridion bookmarklet challenge: an update

A couple of weeks ago, I issued the Tridion bookmarklet challenge. OK - that sounds pretty grand, but really it's not. It's only that having come across the idea of using bookmarklets to enhance the Tridion GUI, this seemed like a great chance to see what inventiveness people could come up with - so why not a challenge? The day after I issued the challenge, my web server went down, so the initial burst of publicity was kind of wasted. Anyway - I hadn't really thought through the details then, so now's my chance to flesh it out a bit, and attempt another deluge of publicity.

So how is it going to work? Here's how:

What you need to do

- Create a useful and well-constructed bookmarklet which enhances the Tridion GUI in some way. It should work with SDL Tridion 2013, but you may wish to consider making it work for 2011 as well.

- Publish it on-line. You can put it on your own web site, or host it somewhere else. (SDL Tridion World, Google Code... whatever - I don't care, as long as it's available via the Internet.)

- Publicise it. You should tweet a link to your entry using #tridionlet. You also need to link to it in an answer to this question on meta.tridion.stackexchange.com. Use whatever other means are at your disposal to publicise your entry.

- Apportion the credit correctly. You can enter as a team if you like - so if one person is responsible for the functional aspects, and another for hacking out gnarly javascript, you should say so. Just as long as it's clear who should get the kudos.

- Get this all done by the end of 31 December 2014.

What happens once you've done this?

The judging will be done by the community. This is the reason why you need to answer the question on meta.

We'll wait until the end of January, which should give people a chance to finish their New Year celebrations, and actually read the code... maybe try the bookmarklets out in real life. There'll be a burst of publicity during January to make sure people think about voting, and then whoever has the most votes by the end of 31 January 2015 will be the winner.

Is it better to wait until January to vote?

Yes - the entries might be improved right up to the deadline. Who knows? Also - some people may prefer not to put their entry on-line until quite late on, or may feel pressured by seeing other entries getting more votes (or fewer). Entrants: remember that many people will wait until January to vote, so don't read too much into it until then. Voters: I'm putting you on your honour to vote for the best entries. So please don't just vote for your mates, and please don't vote to show some kind of company loyalty. This is personal, not corporate.

One-nine availability

A couple of weeks ago, this site went down. That happens from time to time. It went down just as I left the country to go on holiday, and it could only be fixed via physical access, so it was down for a week. At least one person has commented that maybe I should stop with this silliness of running my own server on an old Gentoo box in the meter cupboard, and get some proper hosting.

The thing is, that when I started this blog, some years ago now, I went through a detailed requirements analysis, and a full MoSCoWMeh matrix. If you aren't familiar with MoSCoWMeh, this is an enhanced variant of the well-known MoSCoW technique, which also accommodates the needs of private and hobby-run systems.

The requirement for reliable hosting and 5-nines up-time was classified as Meh, and has remained so since. So now you know.

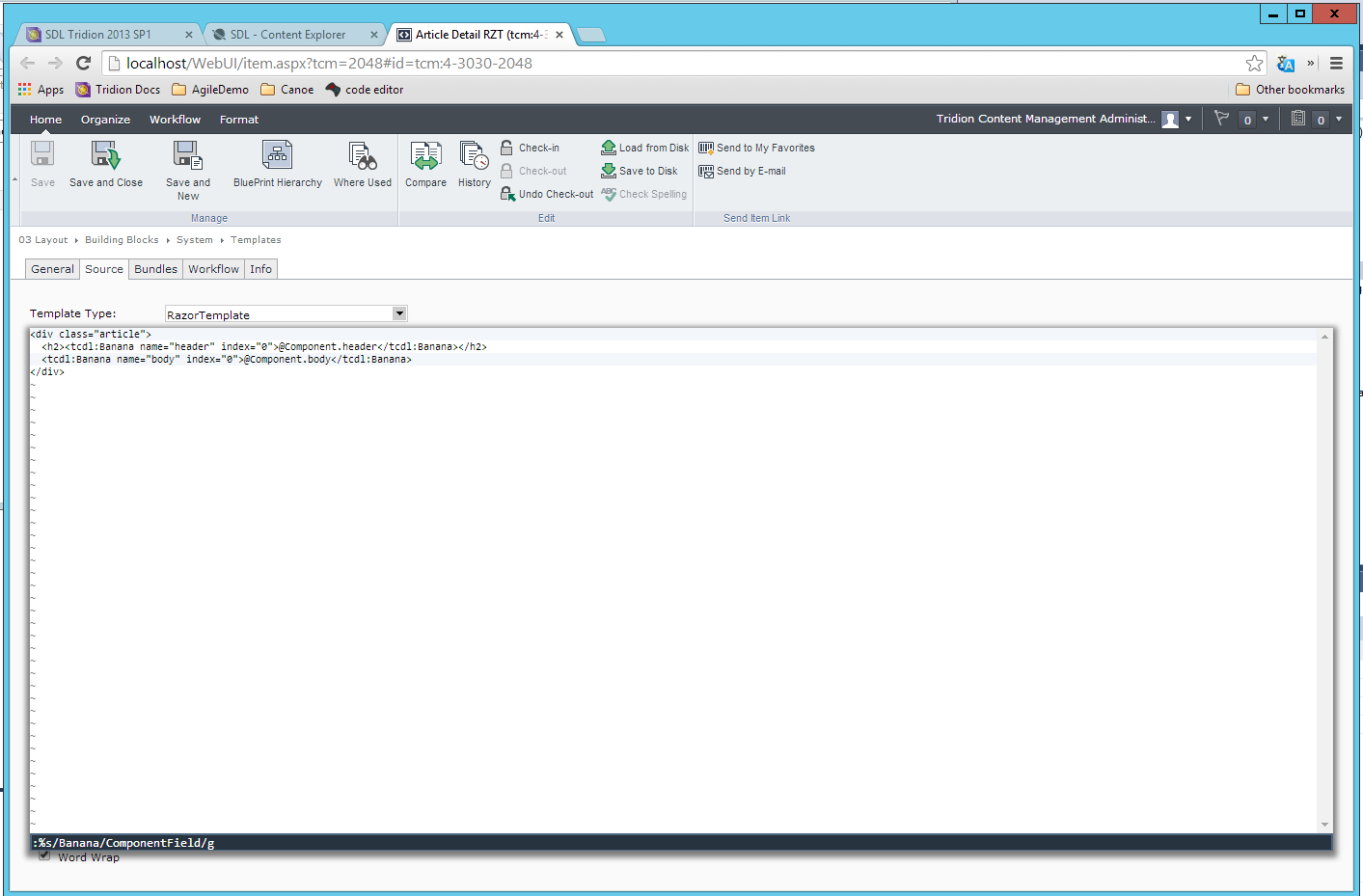

Editing Tridion templates with a little Wasavi sauce

This post is dedicated to all the brave Tridion hackers who struggled through the VBScript years, editing endless templates, most likely in IE6 or worse. How many of us, back in the day, wished for better editing support in the browser? These days, of course, if you have any sense, most of your complex code will be safely tucked away in Visual Studio, but still... who doesn't still occasionally have to carve up a Razor building block, or even DWT? Sure, the browsers have all become awesome, but when all is said and done, you're still editing that template in a textarea. Cheer up - that's all about to change!

I first came across Wasavi a couple of months ago - I can't even remember what the context was, but I mentally classified it as an interesting curiosity and moved on. It was still installed in my browser, but I forgot about it until today, when it suddenly came in useful. Oh hang on a sec... what's Wasavi? It's a browser extension that turns each textarea into a vi editor. Cute eh?

OK - I can see what you're thinking... oh yeah - vi. Bloody geeks. And fair enough - I cut my teeth on vi in 1989, on a nasty old mainframe running Multics. I still like it as an editor, but that probably only indicates a certain level of perversity. So why am I pestering you, my fellow Tridion people, with it? Well - of course, some of you will be as comfortable as I am in grungy old-skool editors. For the rest of you, it may still be useful enough to have it installed in your browser (Chrome, Firefox or Opera... so the first two, eh?)

I offer you... tada... global search and replace!! As you can see in the screenshot, something awful has happened to my template, and all the instances of "ComponentField" have unfortunately been transformed to "Banana". How to fix this?

```
<Ctrl>-<Enter>
:%s/Banana/ComponentField/g<Enter>
:wq
```

Ctrl-Enter opens up the wasavi view. The line beginning with :%s means "on every line, substitute all instances of Banana with ComponentField", and :wq is vi-speak for write-quit, which is the same as save and close, which returns you to the normal textarea view.
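If the vi notation is new to you, the effect of :%s/Banana/ComponentField/g is roughly what this Python sketch does: % means every line, and the trailing g means every match on each line rather than just the first. The function name and sample text are made up, and Python's regular expressions aren't identical to vi's, so treat this as an illustration of the semantics, not a faithful port.

```python
import re


def substitute(text: str, pattern: str, replacement: str, global_flag: bool = True) -> str:
    """Apply a vi-style substitution to every line of text (the % range).

    With global_flag (vi's trailing g) every match on a line is replaced;
    without it, only the first match on each line.
    """
    compiled = re.compile(pattern)
    count = 0 if global_flag else 1  # for re.sub, count=0 means "no limit"
    return "\n".join(compiled.sub(replacement, line, count=count)
                     for line in text.split("\n"))


template = "Banana one\nBanana two Banana"
print(substitute(template, "Banana", "ComponentField"))
```

Leave off the g (global_flag=False) and the second "Banana" on the last line survives, which is exactly the trap vi sets for you.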

This is one example, albeit a fairly handy one, but vi is capable of much more. The next time you're looking at some awkward editing task - think of wasavi, and just Google for the relevant vi commands.

Edit: Just thought of another cool way to use this.... vi has parenthesis-matching, so if you have to unscramble a badly formatted piece of code, just park on a paren, or any other kind of bracket, and hit % to go to its counterpart. (Full-on vim has good auto-formatting features too, but I'm not sure if the browser implementation does much more than the basics... still - useful.)
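For the curious, the % jump boils down to counting nesting depth. This Python sketch (match_bracket is a made-up name) scans forward from an opening bracket or backward from a closing one. Unlike vim, it doesn't look ahead along the line when the cursor isn't on a bracket, and it makes no attempt to skip brackets inside strings or comments.

```python
PAIRS = {"(": ")", "[": "]", "{": "}"}
CLOSERS = {close: open_ for open_, close in PAIRS.items()}


def match_bracket(text: str, pos: int) -> int:
    """Return the index of the bracket matching the one at text[pos]."""
    ch = text[pos]
    if ch in PAIRS:
        target, step = PAIRS[ch], 1      # opening bracket: scan forward
    elif ch in CLOSERS:
        target, step = CLOSERS[ch], -1   # closing bracket: scan backward
    else:
        raise ValueError(f"no bracket at position {pos}")
    depth = 0
    i = pos
    while 0 <= i < len(text):
        if text[i] == ch:
            depth += 1                   # another bracket of the same kind nests deeper
        elif text[i] == target:
            depth -= 1
            if depth == 0:
                return i
        i += step
    raise ValueError("unbalanced brackets")


code = "f(a[1], (b))"
print(match_bracket(code, 1))
```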

Logback could be groovy! But XML FTW

Anyone who works with Tridion content delivery will be familiar with the fact that Logback is used as the logging framework. Recently I found myself looking into it more closely than I had previously, so here are a couple of observations that might be interesting. The first is that you can use the Groovy scripting language instead of XML to write your configuration files. (I'll get to exactly how useful, or otherwise, this might be in a bit...) Anyway - the following is a machine translation of the logback.xml file that ships with Tridion. Now - proponents of the Groovy approach will tell us that Groovy can be much terser than the XML equivalent. At first sight it doesn't look much different, but I imagine you could factor out the creation of all those appenders into some sort of factory, and then it would look a lot shorter. Can I leave that as "an exercise for the student"? :-)

```groovy
import ch.qos.logback.classic.Level
import ch.qos.logback.classic.encoder.PatternLayoutEncoder
import ch.qos.logback.core.rolling.RollingFileAppender
import ch.qos.logback.core.rolling.TimeBasedRollingPolicy
import java.nio.charset.Charset

import static ch.qos.logback.classic.Level.OFF

// Re-read this file when it changes
scan()

// The <property> values from logback.xml. Dotted names such as log.pattern
// aren't legal Groovy identifiers, so they become camel-case variables here.
def logPattern = "%date %-5level %logger{0} - %message%n"
def logHistory = 7
def logFolder = "c:/tridion/log"
def logLevel = Level.toLevel("ERROR")
def logEncoding = "UTF-8"

appender("rollingTransportLog", RollingFileAppender) {
    rollingPolicy(TimeBasedRollingPolicy) {
        fileNamePattern = "${logFolder}/cd_transport.%d{yyyy-MM-dd}.log"
        maxHistory = logHistory
    }
    encoder(PatternLayoutEncoder) {
        charset = Charset.forName(logEncoding)
        pattern = logPattern
    }
    prudent = true
}

appender("rollingDeployerLog", RollingFileAppender) {
    rollingPolicy(TimeBasedRollingPolicy) {
        fileNamePattern = "${logFolder}/cd_deployer.%d{yyyy-MM-dd}.log"
        maxHistory = logHistory
    }
    encoder(PatternLayoutEncoder) {
        charset = Charset.forName(logEncoding)
        pattern = logPattern
    }
    prudent = true
}

appender("rollingMonitorLog", RollingFileAppender) {
    rollingPolicy(TimeBasedRollingPolicy) {
        fileNamePattern = "${logFolder}/cd_monitor.%d{yyyy-MM-dd}.log"
        maxHistory = logHistory
    }
    encoder(PatternLayoutEncoder) {
        charset = Charset.forName(logEncoding)
        pattern = logPattern
    }
    prudent = true
}

appender("rollingCoreLog", RollingFileAppender) {
    rollingPolicy(TimeBasedRollingPolicy) {
        fileNamePattern = "${logFolder}/cd_core.%d{yyyy-MM-dd}.log"
        maxHistory = logHistory
    }
    encoder(PatternLayoutEncoder) {
        charset = Charset.forName(logEncoding)
        pattern = logPattern
    }
    prudent = true
}

appender("rollingSessionPreviewLog", RollingFileAppender) {
    rollingPolicy(TimeBasedRollingPolicy) {
        fileNamePattern = "${logFolder}/cd_preview.%d{yyyy-MM-dd}.log"
        maxHistory = logHistory
    }
    encoder(PatternLayoutEncoder) {
        charset = Charset.forName(logEncoding)
        pattern = logPattern
    }
    prudent = true
}

// Loggers given a null level inherit it from their nearest ancestor (com.tridion)
logger("com.tridion", logLevel)
logger("com.tridion.transport", null, ["rollingTransportLog"])
logger("com.tridion.transport.HTTPSReceiverServlet", null, ["rollingDeployerLog"])
logger("com.tridion.transport.transportpackage", null, ["rollingDeployerLog"])
logger("com.tridion.transformer", null, ["rollingDeployerLog"])
logger("com.tridion.deployer", null, ["rollingDeployerLog"])
logger("com.tridion.tcdl", null, ["rollingDeployerLog"])
logger("com.tridion.event", null, ["rollingDeployerLog"])
logger("com.tridion.monitor", null, ["rollingMonitorLog"])
logger("Tridion.ContentDelivery", logLevel, ["rollingCoreLog"])
logger("com.tridion.preview", null, ["rollingSessionPreviewLog"])
logger("com.tridion.storage.persistence.session", null, ["rollingSessionPreviewLog"])

root(OFF, ["rollingCoreLog"])
```

So why might this be interesting to Tridion infrastructure specialists? Well it isn't. Not at all. At least not right now - because doing it this way requires the groovy runtime to be available, and that isn't in a standard Tridion content delivery setup. I attempted a trivial hack by dropping a couple of the groovy jars in place, but no joy. Realistically, this would only be a practical approach if Tridion decided to build it into the product and support it. I imagine the dev team puts quite some effort into keeping their dependency tree as clean as possible, so this might come under the heading of stuff that would only get added if people really, really wanted it!

Anyway - I love the smell of XML in the morning, so it's all the same to me. So on with the useful part of this post. If you check out exactly how logback gets its configuration settings, you'll see that before it picks up logback.xml, it first looks for a file called logback-test.xml. I'm happy to say that this does work out-of-the-box. This means that when you come across a server where you need to debug a problem, and its standard logging settings need to be boosted up to DEBUG, you don't have to edit the existing config file. Just drop your insanely debuggy logback-test.xml file in next to logback.xml (and restart things) and Bob's your uncle. When you're done, just delete it (and restart). Even the restarting might be optional - another feature of logback is that you can configure it to scan for configuration changes, although I have no clue whether it would then pick up the existence of logback-test.xml.
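To make the precedence concrete, here's a toy Python model of it. It treats the classpath as a single folder and only knows about the two file names mentioned above, so it leaves out logback.groovy and logback's other fallbacks; effective_config and demo_lookup are names I've made up.

```python
import tempfile
from pathlib import Path
from typing import List, Optional

# Simplified lookup order: logback-test.xml wins over logback.xml
CANDIDATES = ("logback-test.xml", "logback.xml")


def effective_config(config_dir: Path) -> Optional[Path]:
    """Return the file this model expects logback to pick up from config_dir."""
    for name in CANDIDATES:
        candidate = config_dir / name
        if candidate.is_file():
            return candidate
    return None


def demo_lookup() -> List[Optional[str]]:
    """Drop in the normal config, add a debug override, then remove it again."""
    seen = []
    with tempfile.TemporaryDirectory() as tmp:
        folder = Path(tmp)
        seen.append(effective_config(folder))
        (folder / "logback.xml").write_text("<configuration/>\n")
        seen.append(effective_config(folder))
        (folder / "logback-test.xml").write_text('<configuration debug="true"/>\n')
        seen.append(effective_config(folder))
        (folder / "logback-test.xml").unlink()
        seen.append(effective_config(folder))
    return [p.name if p else None for p in seen]


print(demo_lookup())
```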

Ok - this is such a minor benefit over copying and renaming that it hardly justifies the deaths of all those IP packets that were bravely lost in transmission during the serving of this web page. Whatever.... that's the thing with research, eh? Negative results are also important to report. In short - logback.groovy looked cool, but won't work - and maybe carrying a customised logback-test.xml around in your toolkit might be handy, but then again, maybe not.

I'll sign off with one more public service announcement. I recently saw someone using a logback configuration that specified a logging level of ON. Apparently they had been advised to do so by someone who ought to have checked first. The possible values are OFF, ERROR, WARN, INFO, DEBUG, TRACE and ALL. Anything else will not be recognised, and you'll get DEBUG logging, which is the default if that happens.
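Here's a Python model of that fallback, based on my understanding of logback's Level.toLevel(String), which matches level names without regard to case and returns DEBUG for anything it doesn't recognise. The list includes ALL, which logback also accepts. It's an illustration, not logback code, and to_level is a made-up name.

```python
from typing import Optional

# The level names this model recognises
KNOWN_LEVELS = ("OFF", "ERROR", "WARN", "INFO", "DEBUG", "TRACE", "ALL")


def to_level(name: Optional[str], default: str = "DEBUG") -> str:
    """Return the recognised level, or the default for anything else (like ON)."""
    if name is None:
        return default
    upper = name.upper()  # matching is case-insensitive
    return upper if upper in KNOWN_LEVELS else default


for configured in ("ERROR", "debug", "ON"):
    print(f"{configured!r} -> {to_level(configured)}")
```

So "ON" doesn't switch logging on in any useful sense; it quietly becomes DEBUG.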

What not to do when upgrading to Grub2

I'd been following the Gentoo Wiki guidance on upgrading Grub, and had been taking it very carefully. I'd worried about getting this right, as getting it wrong would leave me with a brick, so I'd been very pleased to see the notes on using the old bootloader to chain load the new one. That way I could check that my configuration was correct before taking the plunge of installing the new version into the Master Boot Record. I didn't want to automatically generate the new config file, as I didn't trust it. (Rightly so, as it turned out, because my initrd files didn't follow the strict naming requirements, so they weren't picked up by the config generation script.) Anyway - the hand-written config was half a dozen lines long, and the generated one was utterly incomprehensible.

So anyway - I managed to create the config file, and get everything set up for chain loading. I rebooted the server, and bingo - there was the chain loader entry in my "old" boot screen, and when I followed it, I got the new menu and could boot the server. Great stuff! Now it should have been a simple question of running grub2-install, and I'd be finished. So I did this, and then.... the computer wouldn't start. Fortunately I had a grub prompt, so grub was "working" - but it obviously couldn't find its config file. I already knew that with the right incantations it might be possible to get the thing to boot without a config file, and after a bit of googling, I got enough clues to attempt it. (For the record, what I think I'd done wrong was to fail to remount /boot after my chain test and before running grub2-install, with the result that grub then didn't know how to correctly find /boot.)

It took a few attempts, but the command line completion in grub helps a lot. This is what I eventually ended up typing at the grub prompt to get a working boot.

```
grub> set root=(hd0,1)
grub> linux /kernel-gen-newudev-3.3.8-gentoo root=/dev/sda3
grub> initrd /initramfs-gen-newudev-3.3.8-gentoo
grub> boot
```

Note that the root for the boot loader is different from the root of the operating system, so you have to specify them separately. Obviously YMMV for the names of the kernel and initrd files, not to mention device identifiers.
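Once you've got a boot working by hand, the same commands translate almost directly into a menuentry in grub.cfg, minus the final boot, which GRUB does implicitly at the end of an entry. Here's a Python sketch that builds such an entry; grub_menuentry and the title are hypothetical, and you'd substitute your own kernel, initrd and device names.

```python
def grub_menuentry(title: str, boot_partition: str, kernel: str,
                   initrd: str, root_device: str) -> str:
    """Build a grub.cfg menuentry from the commands typed at the grub prompt.

    boot_partition is GRUB's name for the partition holding /boot, e.g. (hd0,1);
    root_device is the kernel's root filesystem, e.g. /dev/sda3.
    """
    if "'" in title:
        raise ValueError("title must not contain a single quote")
    lines = [
        f"menuentry '{title}' {{",
        f"    set root={boot_partition}",
        f"    linux /{kernel} root={root_device}",
        f"    initrd /{initrd}",
        "}",
    ]
    return "\n".join(lines)


print(grub_menuentry(
    "Gentoo 3.3.8",
    "(hd0,1)",
    "kernel-gen-newudev-3.3.8-gentoo",
    "initramfs-gen-newudev-3.3.8-gentoo",
    "/dev/sda3",
))
```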

But the real advice here is to avoid missing out that crucial mount operation!!

Gentoo emerge dies with 'failed to open /dev/urandom' when wrong default python is configured.

So there I was - just for fun building my new Gentoo system, when all of a sudden, I wasn't. Building, that is. I wasn't building anything. In fact, part of the motivation for a clean build had been that emerging new things was getting tiresomely fragile. Anyway - here's what happened when I tried an emerge. The interesting part is where it says: Fatal Python error: Failed to open /dev/urandom

```
>>> Emerging (1 of 18) sys-libs/glibc-2.17
* Fetching files in the background. To view fetch progress, run
* `tail -f /var/log/emerge-fetch.log` in another terminal.
* glibc-2.17.tar.xz SHA256 SHA512 WHIRLPOOL size ;-) ... [ ok ]
* glibc-2.17-patches-8.tar.bz2 SHA256 SHA512 WHIRLPOOL size ;-) ... [ ok ]
make -j2 -s glibc-test
make -j2 -s glibc-test
>>> Unpacking source...
* Checking gcc for __thread support ... [ ok ]
* Checking kernel version (3.3.8 >= 2.6.16) ... [ ok ]
* Checking linux-headers version (3.9.0 >= 2.6.16) ... [ ok ]
>>> Unpacking glibc-2.17.tar.xz to /var/tmp/portage/sys-libs/glibc-2.17/work
>>> Unpacking glibc-2.17-patches-8.tar.bz2 to /var/tmp/portage/sys-libs/glibc-2.17/work
* Applying Gentoo Glibc Patchset 2.17-8 ...
* 0035_all_glibc-2.16-i386-math-feraiseexcept-overhead.patch ... [ ok ]
* 0059_all_glibc-2.19-make-4.0.patch ... [ ok ]
* 0065_all_glibc-2.18-qecvt-guards.patch ... [ ok ]
* 0070_all_glibc-2.18-localedef-page-align-1.patch ... [ ok ]
* 0071_all_glibc-2.18-localedef-page-align-2.patch ... [ ok ]
* 0072_all_glibc-2.18-localedef-page-align-3.patch ... [ ok ]
* 0085_all_glibc-disable-ldconfig.patch ... [ ok ]
* 0090_all_glibc-2.17-arm-ldso.cache.patch ... [ ok ]
* 1005_all_glibc-sigaction.patch ... [ ok ]
* 1008_all_glibc-2.16-fortify.patch ... [ ok ]
* 1040_all_2.3.3-localedef-fix-trampoline.patch ... [ ok ]
* 1055_all_glibc-resolv-dynamic.patch ... [ ok ]
* 1505_all_glibc-nptl-stack-grows-up.patch ... [ ok ]
* 1506_all_glibc-2.17-hppa-fpu.patch ... [ ok ]
* 1507_all_glibc-2.17-hppa-ldso-flag.patch ... [ ok ]
* 1507_all_hppa-ia64-DL_AUTO_FUNCTION_ADDRESS.patch ... [ ok ]
* 1508_all_glibc-2.17-hppa-futex.patch ... [ ok ]
* 1508_all_hppa-fanotify_mark.patch ... [ ok ]
* 3020_all_glibc-tests-sandbox-libdl-paths.patch ... [ ok ]
* 5063_all_glibc-dont-build-timezone.patch ... [ ok ]
* 6024_all_alpha-fix-signal-thunk-unwind-info.patch ... [ ok ]
* 6230_all_arm-glibc-hardened.patch ... [ ok ]
* Done with patching
* Using GNU config files from /usr/share/gnuconfig
* Updating scripts/config.sub [ ok ]
* Updating scripts/config.guess [ ok ]
>>> Source unpacked in /var/tmp/portage/sys-libs/glibc-2.17/work
Fatal Python error: Failed to open /dev/urandom
/usr/lib/portage/bin/phase-functions.sh: line 87: 4204 Aborted "${PORTAGE_PYTHON:-/usr/bin/python}" "${PORTAGE_BIN_PATH}"/filter-bash-environment.py "${filtered_vars}"
* ERROR: sys-libs/glibc-2.17::gentoo failed (unpack phase):
* filter-bash-environment.py failed
*
* Call stack:
* ebuild.sh, line 714: Called __ebuild_main 'unpack'
* phase-functions.sh, line 993: Called __filter_readonly_variables '--filter-features'
* phase-functions.sh, line 137: Called die
* The specific snippet of code:
* "${PORTAGE_PYTHON:-/usr/bin/python}" "${PORTAGE_BIN_PATH}"/filter-bash-environment.py "${filtered_vars}" || die "filter-bash-enviroment.py failed"
```

So what was going on here? Well, as it turned out, my system has three versions of python installed, and Gentoo's portage system (of which emerge is part) seems to rely on you not using python 3. After a short bit of fiddling with "eselect python list" and "eselect python set" to get the default python back to 2.7, the build ran like a charm.
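If you want a quick sanity check before kicking off a long emerge, something like this Python sketch could flag a python3 default. It assumes the usual eselect list layout, where the active interpreter is marked with an asterisk; the sample output is illustrative rather than captured from my machine, and selected_python and is_python3 are made-up names.

```python
import re
from typing import Optional

# One entry of `eselect python list`, e.g. "  [3]   python3.3 *"
ENTRY_RE = re.compile(r"^\s*\[(\d+)\]\s+(\S+)(\s+\*)?\s*$")


def selected_python(eselect_output: str) -> Optional[str]:
    """Return the interpreter marked with * in the listing, or None."""
    for line in eselect_output.splitlines():
        m = ENTRY_RE.match(line)
        if m and m.group(3):
            return m.group(2)
    return None


def is_python3(interpreter: Optional[str]) -> bool:
    return interpreter is not None and interpreter.startswith("python3")


SAMPLE = """\
Available Python interpreters:
  [1]   python2.7
  [2]   python3.2
  [3]   python3.3 *
"""

current = selected_python(SAMPLE)
print(current, "needs switching" if is_python3(current) else "looks fine")
```

If it reports python3, "eselect python set" with the python2.7 entry puts things back the way portage wanted them at the time.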

So anyway - this has got to count as the most bizarrely mis-reported error I've seen in recent years. "/dev/urandom" was working fine. I could start it and stop it ("/etc/init.d/urandom stop" and so forth) and I could use it to access randomness. Why then did I get the "failed to open" message with one version of python, and not with another? Answers on a postcard? Whatever - this was a public service announcement.